Stereotype Analysis in AI-Generated Images of Older Adults: A Comparative Study

- 1. Hospital Central de la Cruz Roja, Spain

Abstract

Purpose: To analyze how AI represents older adults, identifying potential stereotypical patterns using objective visual content analysis and perceptual dimensions of valence and dominance.

Methods: Eight images were generated by AI using the prompt: “Create a hyperrealistic image of an 85-year-old woman and man.” The images were analyzed across eight dimensions: facial expression, posture, environment, clothing, color palette, dominant stereotypes, ethnic diversity, and perceptual variables (valence and dominance).

Results: All images (100%) depicted Caucasian phenotypes, with neutral or slightly sad expressions and static postures. In the Oosterhof and Todorov framework, the images fell predominantly in the low-valence, low-dominance quadrant, which is associated with vulnerability.

Conclusion: AI generates homogeneous representations of aging with low emotional and ethnic variability. The use of validated perceptual models makes it possible to show how AI reinforces ageist stereotypes under the guise of aesthetic neutrality.

Keywords

• Stereotype; AI-Generated; Images; Older Adults

Citation

Rubio YA (2026) Stereotype Analysis in AI-Generated Images of Older Adults: A Comparative Study. J Aging Age Relat Dis 5(1): 1009.

INTRODUCTION

The visual representation of aging in emerging technologies is a key field for understanding how societies imagine older adults. In recent years, generative artificial intelligence (AI) has become an active agent in producing synthetic images used in advertising, media, and institutional communications. Several studies warn that these technologies replicate existing social biases, including visual ageism [1-3]. Recent research highlights the underrepresentation of older adults (only 2.5%, compared with 57% for young adults aged 25–34) [2] and their association with passivity or dependency, a phenomenon that has been termed “generative ageism” [1]. It is therefore essential to examine how different AI models respond to the same prompt (the set of instructions given to an AI model to perform a task) and what representations of aging they produce.

Oosterhof and Todorov (2008) [4] demonstrated that the social evaluation of faces can be described along two axes: valence (trustworthiness/pleasantness) and dominance (strength/physical power). These axes explain most social judgments of facial images and can be applied to the evaluation of AI-generated images. This study presents a comparative analysis of eight AI-generated images to identify stereotypes, explore differences among models, and relate the findings to the literature on visual ageism.

MATERIALS AND METHODS

A comparative visual content analysis was conducted on eight images generated from the controlled prompt “Create a hyperrealistic image of an 85-year-old person,” using four AI models: ChatGPT (Generative Pre-trained Transformer, OpenAI, California, USA), GROK (xAI, California, USA), Gemini (Google, California, USA), and Copilot (Microsoft, Washington, USA). Each AI was requested to produce one male and one female image.
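The four-model, two-gender design described above can be written out as a small grid. The following Python sketch is illustrative only: the function name `build_design` and the panel-label scheme (model number plus A for male, B for female, mirroring the Figure 1 caption) are assumptions for this example, and the code only enumerates the design cells. It does not call any image-generation service, and it does not assume how the male or female variant was requested from each model.

```python
from itertools import product

# Prompt and model list as stated in the Methods text.
PROMPT = "Create a hyperrealistic image of an 85-year-old person"
MODELS = ["ChatGPT", "GROK", "Gemini", "Copilot"]  # numbered 1-4 in Figure 1
GENDERS = ["male", "female"]                       # panels A and B in Figure 1


def build_design(models, genders):
    """Return one (panel_label, model, gender) tuple per requested image."""
    cells = []
    for (m_idx, model), (g_idx, gender) in product(
        enumerate(models, start=1), enumerate(genders)
    ):
        # Hypothetical label: model number followed by A, B, ... for gender.
        panel = f"{m_idx}{chr(ord('A') + g_idx)}"
        cells.append((panel, model, gender))
    return cells


design = build_design(MODELS, GENDERS)
for panel, model, gender in design:
    print(panel, model, gender)
```

Enumerating the cells this way makes the sample size explicit: four models times two requested images each gives the eight images analyzed.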

ANALYSIS

The images were coded across the following dimensions:

- Facial Expression (seriousness, tenderness, dynamism).
- Posture and Activity (static, active, interactive).
- Environment (neutral, domestic, social, natural).
- Clothing (classic, modern, stylistic diversity).
- Color Palette (cool, warm, high-contrast).
- Dominant Stereotypes (fragility, passivity, wisdom, tenderness).
- Ethnic Diversity (presence or absence of phenotypic variety and roles).
- Perceptual Variables [4]: valence (pleasantness or trust conveyed by the face) and dominance (perceived strength or control).

Following Mehrabian’s emotional model [5], the activation–passivity axis was used as a complementary interpretive framework. The combination of facial expression, body posture, and context allowed a global classification of the images in terms of activation (low activation being the absence of intense emotions, broad gestures, or movement) and passivity (high passivity being a predominance of static positions and a lack of environmental interaction).
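The placement of an image in the two-dimensional valence and dominance space [4] can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the coding procedure used in the study: the numeric scale (from -1 to +1), the midpoint of 0, and the names `PerceptualRating`, `quadrant`, and `quadrant_counts` are all hypothetical, and the example ratings are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PerceptualRating:
    image_id: str     # e.g. a panel label
    valence: float    # assumed scale: -1 (low) to +1 (high)
    dominance: float  # assumed scale: -1 (low) to +1 (high)


def quadrant(rating, midpoint=0.0):
    """Name the quadrant of the valence/dominance plane a rating falls in."""
    v = "high" if rating.valence >= midpoint else "low"
    d = "high" if rating.dominance >= midpoint else "low"
    return f"{v} valence / {d} dominance"


def quadrant_counts(ratings, midpoint=0.0):
    """Count how many ratings fall in each quadrant."""
    counts = {}
    for rating in ratings:
        key = quadrant(rating, midpoint)
        counts[key] = counts.get(key, 0) + 1
    return counts


# Made-up ratings for illustration only; these are NOT the study's data.
example = [
    PerceptualRating("example-1", valence=-0.4, dominance=-0.6),
    PerceptualRating("example-2", valence=-0.3, dominance=0.1),
    PerceptualRating("example-3", valence=-0.5, dominance=-0.2),
]
print(quadrant_counts(example))
```

A binary split at the midpoint is the simplest choice. A coding scheme that distinguishes an intermediate level on either axis would need a third band rather than a single threshold.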

RESULTS

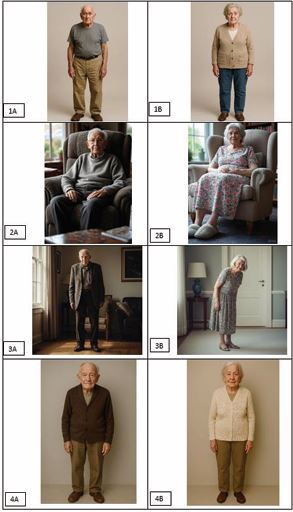

The images showed low diversity of representations (Figure 1).

Figure 1. Images generated from the prompt “Create a hyperrealistic image of an 85-year-old person,” arranged in pairs: male (A) and female (B) for each AI model, numbered 1 (ChatGPT), 2 (GROK), 3 (Gemini), and 4 (Copilot).

All images depicted Caucasian individuals, with no phenotypic variation. Facial expressions were predominantly serious or neutral, with no displays of joy or surprise. Approximately 90% of postures were static, without gestures or interaction; in terms of Mehrabian’s model [5], the images fall within low activation and high passivity. Environments were neutral or domestic, clothing was sober, and no elements conveyed modernity, dynamism, or cultural diversity. All images showed low activity (absence of meaningful actions) and predominantly muted color palettes, reinforcing perceptions of deterioration or inactivity. These findings support prior studies on invisibility and ageist biases in AI [1-3].

In the Oosterhof and Todorov two-dimensional space [4], valence was low (neutral expressions with features associated with mild sadness or lack of interaction, such as downturned lips and a vacant gaze) and dominance was low (slouched postures, indirect gazes, absence of expansive gestures). Gender differences were noted: women conveyed vulnerability or tenderness, whereas men exhibited medium dominance but low valence, associated with severity or distant wisdom. Consequently, the AI images were linked to perceptions of fragility, passivity, and dependence.

Stylistic differences among AI models were minor: Gemini introduced dramatic, high-contrast lighting but maintained low valence; Copilot produced more neutral faces; GROK and ChatGPT reproduced almost identical configurations of emotional activation.

DISCUSSION

The results confirm warnings in the literature regarding generative ageism. AI-generated images reduce aging to passivity, fragility, and deterioration, reproducing classical stereotypes. This aligns with the findings of Linares-Lanzman et al. (2023–2025) [2], who show that AI rarely represents older adults and that, when it does, it portrays dependency and a lack of diversity. Phenotypic homogeneity (Caucasian individuals) obscures ethnic plurality, consistent with other studies on the lack of representativeness in AI training datasets. Predominantly serious or neutral faces, according to FACS [6], are associated with sadness or neutrality, conveying low energy.

This study provides empirical evidence of how different AI models respond to the same prompt, revealing shared biases. Although stylistic variations exist between models (more dramatic in some, more neutral in others), the common pattern is a reduction of aging to a homogeneous and limited representation. Technologically, this highlights the urgent need to improve datasets and develop visual fairness metrics. Socially, it warns against reinforcing negative stereotypes when AI-generated images are used in campaigns or publications.

In the Oosterhof and Todorov model, the images occupy zones of low valence and low dominance, associated with perceptions of low warmth and limited competence, consistent with the framework of Fiske et al. [7]. Methodologically, integrating quantifiable perceptual dimensions (valence, dominance) makes it possible to move beyond traditional descriptive approaches, bringing visual analysis closer to the experimental rigor of social psychology and situating the findings within a validated theoretical framework [8].

These results arrive in a changing regulatory context: Article 95 of EU Regulation 2024/1689 (AI Act) [9] explicitly encourages the development of codes of conduct for AI systems, with requirements for reliability, transparency, and respect for fundamental rights.

CONCLUSION

AI-generated images reproduce classical visual ageism biases, particularly the reduction of aging to fragility and passivity, without ethnic or role diversity. Solutions are therefore not only technical but also political and cultural: inclusive datasets, visual bias auditing protocols, and user-centered design approaches are urgently needed to promote a more plural, active, and dignified vision of aging.

REFERENCES

- Allen L, Xu W, Nishikitani M, Patil VA, Bradley D. Age bias in artificial intelligence (AI): a visual properties analysis of AI images of older versus younger people. Innov Aging. 2023; 7: 986.

- Linares-Lanzman J, Stypińska J, Rosales A. Generative ageism: when generative AI reinforces age stereotypes. COMeIN. 2025; 151.

- QuintoPiso.net. When generative AI reinforces age stereotypes. 2025.

- Oosterhof NN, Todorov A. The functional basis of face evaluation. PNAS. 2008; 105: 11087-11092.

- Mehrabian A. Nonverbal Communication. Chicago: Aldine-Atherton; 1972.

- Ekman P, Friesen WV. Facial Action Coding System. Palo Alto: Consulting Psychologists Press; 1978.

- Fiske ST, Cuddy AJC, Glick P. Universal dimensions of social cognition: warmth and competence. Trends Cogn Sci. 2007; 11: 77-83.

- Back MD, Stopfer JM, Vazire S, Gaddis S, Schmukle SC, Egloff B, et al. Facebook profiles reflect actual personality, not self-idealization. Psychol Sci. 2010; 21: 372-374.

- European Union. Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 on harmonized rules on artificial intelligence (AI Act). Official J European Union. 2024; L: 1-266.